Network Security at all levels

In this, the second of a multi-part series on securing your workloads, I'll look more broadly at why, where, and how you should be securing your network. I'll look at both the principles and the AWS services that help you reduce the risk of network-based breaches. The introduction to the Security at all levels series gives an overview of what I believe security in depth means and why we should all follow its principles.

So I've previously talked about NACLs and Security Groups (here and here) and their role in securing your workload. This post goes into further detail on the controls that should be considered when deploying AWS services to ensure the security of your solutions. There is a fine line between network and application security. I will not talk about what I deem application security, such as IAM roles for services or application-level restrictions such as rate limiting on a service. However, I do believe services such as WAF and load balancers are network-level controls, so I will touch on them.

There are many possible ways to design and build everything in AWS. While none are wrong in themselves, there are definitely best practices that AWS recommends for various solutions and, in some cases, is beginning to enforce. Some of these design decisions are usually attributed to resilience or reliability, but I will touch on the components where I think they can also enhance a security posture.

Perimeter Security

Perimeter security, or edge security, is the traditional approach to security. Much like the bank example, it was about securing the front door of the data centre and trusting everyone, or every packet, that you allowed to enter. In this way, early firewalls only worried about the source, the destination and the protocols being used. While they often had very long rule sets, early firewalls were little more than appliances that applied NACL-style allow and deny rules.

As threats increased, firewalls became more intelligent, gaining the ability not only to read a packet's IP header but also to inspect the packet's content. This led to enhanced capabilities, often referred to as Next Generation Firewalls. AWS implements these capabilities in three services: AWS Web Application Firewall (WAF), launched in 2015; AWS Shield, launched in 2016; and AWS Network Firewall, released in 2020.

So how do we use these in a layered defence?

Well, the first thing we need to understand is how they operate. AWS WAF and Shield do not sit inside the VPC and can only be placed in front of certain services. AWS WAF is a specialised firewall implementation focused on protecting web services. So if you are using something like an ALB or CloudFront to present a website, API or other HTTP service, it is best to protect it with WAF. As well as filtering using traditional ACLs, it has a variety of actions beyond just block and allow, such as count, CAPTCHA and challenge. In addition, it can read the HTTP headers and body to check for conditions such as a particular user agent or body content containing SQL code. So where you are using supported services and HTTP protocols, WAF should be applied to traffic entering the VPC. It is almost like vetting a guest before you invite them into the main lobby.

AWS Shield also sits outside of the VPC but can protect a slightly larger set of services. Shield protects against a different set of threats, such as Distributed Denial of Service (DDoS) and vulnerability attacks. It also works at several layers of the OSI stack and, while it integrates with WAF for layer 7 attacks, it can also use information from other layers to detect and respond to threats.
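As a sketch of the header and body inspection described above, the Python below builds two AWS WAF (WAFv2) rules as dictionaries in the JSON rule format: one blocks requests whose body matches WAF's SQL injection detection, the other uses the count action on requests whose User-Agent header contains a given string. The rule names, metric names and the `badbot` string are hypothetical, and the field names should be checked against the WAFv2 API reference before the rules are added to a web ACL.

```python
import json


def visibility(metric_name: str) -> dict:
    """CloudWatch metrics and sampled requests for a rule."""
    return {
        "SampledRequestsEnabled": True,
        "CloudWatchMetricsEnabled": True,
        "MetricName": metric_name,
    }


def sqli_body_rule(priority: int) -> dict:
    """Block requests whose body matches WAF's SQL injection detection."""
    return {
        "Name": "block-sqli-in-body",  # hypothetical name
        "Priority": priority,
        "Statement": {
            "SqliMatchStatement": {
                "FieldToMatch": {"Body": {}},
                # URL-decode the body before inspecting it
                "TextTransformations": [{"Priority": 0, "Type": "URL_DECODE"}],
            }
        },
        "Action": {"Block": {}},
        "VisibilityConfig": visibility("blockSqliInBody"),
    }


def user_agent_rule(priority: int, agent: str) -> dict:
    """Count, rather than block, requests whose User-Agent contains `agent`."""
    return {
        "Name": "count-suspicious-user-agent",  # hypothetical name
        "Priority": priority,
        "Statement": {
            "ByteMatchStatement": {
                "SearchString": agent,  # keep lower-case: the header is lower-cased below
                "FieldToMatch": {"SingleHeader": {"Name": "user-agent"}},
                "TextTransformations": [{"Priority": 0, "Type": "LOWERCASE"}],
                "PositionalConstraint": "CONTAINS",
            }
        },
        "Action": {"Count": {}},
        "VisibilityConfig": visibility("countSuspiciousUserAgent"),
    }


rules = [sqli_body_rule(0), user_agent_rule(1, "badbot")]
print(json.dumps(rules, indent=2))
```

Counting first is a common way to see what a new rule would catch before switching its action to block.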

AWS Network Firewall also sits outside of the VPC, but is accessed via a VPC endpoint, specifically a Network Firewall endpoint placed in a subnet of the VPC. In this way it works the same as a self-hosted firewall that is shared between VPCs using a Gateway Load Balancer. As its endpoint sits inside the VPC, traffic reaches it after any NACLs. While it can perform the same role as a NACL in addition to its advanced capabilities, I would still recommend using NACLs. The reason for this is that for simple allow and deny rules NACLs are significantly faster and come at no cost, whereas the same traffic blocked on the firewall is charged at a per-GB rate.

VPC Layout

So VPC security is more than just having a NACL and some Security Groups. Having the correct subnet layout and other networking components can provide a greater risk reduction than just these two things alone.

So before VPC there was EC2-Classic Networking, a solution that in essence made everything a single public subnet. All instances got a public IP and could talk to and from what they wanted. The only thing to protect them was security groups, and one wrong config could have your resources compromised in less than 15 minutes.

With VPC came the ability to make public only what needed to be. The power of invisibility is a great security tool. Moving resources into a VPC's private subnets not only hid the targets, similar to turning off ICMP (ping, traceroute), but also meant their IPs were not even reachable.

So how many subnets should you have? Many people are happy with a single public and a single private subnet per AZ. The challenge with this is that all hosts in a single subnet then rely on security groups for protection. For very small networks serving a single service this might not be an issue, but where networks are larger, with multiple services or hosts, it can cause problems. As with NACLs versus Network Firewall, security groups place the load of implementing the security onto the instances themselves. As a result, there are limits on security groups and how fast they can process traffic. By having multiple private subnets for different application layers, such as web, application and data, broad NACL rules can filter out significant amounts of traffic before it reaches hosts or services. This reduces the load on those hosts, which in turn improves performance and can reduce costs.
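The NACL behaviour that makes those broad subnet rules cheap can be modelled in a few lines. NACL rules are evaluated in ascending rule-number order, the first matching rule decides the outcome, and anything matching no rule is denied. The sketch below is a simplified model, not an AWS API: it only evaluates inbound rules by source address, and the `NaclRule` type, CIDRs and PostgreSQL port are hypothetical examples for a data tier that should only accept database traffic from the application subnet.

```python
from __future__ import annotations

from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class NaclRule:
    number: int    # lower numbers are evaluated first
    cidr: str      # source range for an inbound rule
    protocol: str  # "tcp", "udp" or "all"
    port_from: int
    port_to: int
    allow: bool


def nacl_allows(rules: list[NaclRule], src_ip: str, protocol: str, port: int) -> bool:
    """Return True if an inbound packet is allowed by a (simplified) NACL."""
    for rule in sorted(rules, key=lambda r: r.number):
        if ip_address(src_ip) not in ip_network(rule.cidr):
            continue
        if rule.protocol != "all" and (
            rule.protocol != protocol or not rule.port_from <= port <= rule.port_to
        ):
            continue
        return rule.allow  # first match wins, whether allow or deny
    return False  # the implicit final "*" rule denies everything else


# Hypothetical data-tier NACL: the app subnet may reach PostgreSQL (5432),
# except one host that has been quarantined by a lower-numbered deny rule.
data_tier_inbound = [
    NaclRule(100, "10.0.2.0/24", "tcp", 5432, 5432, True),
    NaclRule(50, "10.0.2.99/32", "all", 0, 65535, False),
]
```

Because NACLs are stateless, a real data-tier NACL would also need an outbound rule for the ephemeral return ports, which this model leaves out.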

Security Group Sizing

Apart from the size and scope of a subnet, how you use security groups can have a big impact on the blast radius of security risk.

I have seen many people put all hosts for a service in a single security group that allows any traffic from resources assigned to that same security group. In essence, this is the same rule as the default security group. The challenge with this is that if a single component of the solution is compromised, either directly or through the SDLC, it can reach all the other resources that are part of the solution.

For me, as with subnets, at a minimum each layer of the application should have its own security group with the minimum set of rules needed. Ideally, apart from the initial entry point, all rules should reference other security groups. So the ALB might allow inbound on port 443 from 0.0.0.0/0 and outbound on port 443 to the security group around the EC2 instances. Then, as we go down the chain, we only allow inbound and outbound on specific ports to and from other security groups.
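The security group chain described above can be sketched as a small model in which rules reference either a CIDR range or another security group. It is a simplification, not the EC2 API: security groups are stateful, so the model only checks the initiating direction (the source's outbound rules and the destination's inbound rules), and the group IDs such as `sg-alb`, the IP addresses and the PostgreSQL port are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class Rule:
    port: int
    peer: str  # a CIDR such as "0.0.0.0/0" or a group ID such as "sg-app"


@dataclass
class SecurityGroup:
    inbound: list[Rule] = field(default_factory=list)
    outbound: list[Rule] = field(default_factory=list)


@dataclass(frozen=True)
class Resource:
    ip: str
    groups: frozenset[str] = frozenset()  # empty for clients outside the VPC


def _peer_matches(peer: str, other: Resource) -> bool:
    if peer.startswith("sg-"):
        return peer in other.groups  # security group reference
    return ip_address(other.ip) in ip_network(peer)  # CIDR rule


def can_connect(src: Resource, dst: Resource, port: int, sgs: dict[str, SecurityGroup]) -> bool:
    # Traffic from outside the VPC has no security group to leave through.
    egress_ok = not src.groups or any(
        r.port == port and _peer_matches(r.peer, dst)
        for g in src.groups for r in sgs[g].outbound
    )
    ingress_ok = any(
        r.port == port and _peer_matches(r.peer, src)
        for g in dst.groups for r in sgs[g].inbound
    )
    return egress_ok and ingress_ok


# Only the ALB accepts traffic from a CIDR; every other rule references a group.
sgs = {
    "sg-alb": SecurityGroup(inbound=[Rule(443, "0.0.0.0/0")], outbound=[Rule(443, "sg-app")]),
    "sg-app": SecurityGroup(inbound=[Rule(443, "sg-alb")], outbound=[Rule(5432, "sg-db")]),
    "sg-db": SecurityGroup(inbound=[Rule(5432, "sg-app")]),
}
client = Resource("203.0.113.10")
alb = Resource("10.0.0.10", frozenset({"sg-alb"}))
app = Resource("10.0.2.10", frozenset({"sg-app"}))
db = Resource("10.0.3.10", frozenset({"sg-db"}))
```

Because the database group only references `sg-app`, a host in any other tier fails the ingress check even if it is compromised, which is what keeps the reachable paths small and known.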

This way the attack surface becomes very limited and, more importantly, known. As a result, other measures can be put in place to protect those interactions.

Security through Routing

As well as managing which resources in a VPC can be seen, managing what those resources can see and reach is another way to provide security. This comes in many forms, from basic route table manipulation to using AWS services to remove the need for network connectivity altogether.

First, similar to NACLs and subnets, is having specific route tables that only expose the relevant routes for each tier. For example, if there is no outbound traffic and all inbound traffic comes in via a load balancer in the public subnet, the private subnets should only have the local route.
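Route selection in a VPC uses the most specific (longest prefix) matching route, and a destination with no matching route simply cannot be reached. The sketch below models that with Python's `ipaddress` module; the VPC CIDR and the `igw-example` target are hypothetical, and the model ignores details such as prefix-list routes and gateway route tables.

```python
from __future__ import annotations

from ipaddress import ip_address, ip_network

VPC_CIDR = "10.0.0.0/16"  # hypothetical VPC range

public_rt = {VPC_CIDR: "local", "0.0.0.0/0": "igw-example"}
private_rt = {VPC_CIDR: "local"}  # local route only: no path out of the VPC


def next_hop(route_table: dict[str, str], destination: str) -> str | None:
    """Return the target of the most specific matching route, or None."""
    addr = ip_address(destination)
    matches = [
        (ip_network(cidr), target)
        for cidr, target in route_table.items()
        if addr in ip_network(cidr)
    ]
    if not matches:
        return None  # no route: the traffic has nowhere to go
    return max(matches, key=lambda m: m[0].prefixlen)[1]
```

For example, `next_hop(private_rt, "203.0.113.5")` finds no route at all, while the same lookup against `public_rt` resolves to the internet gateway.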

Second is the use of VPC endpoints. Whether to an AWS service or a private service, this removes the need for direct network connectivity. It ensures that all traffic between the VPC and the destination service remains on the AWS backbone. In addition, it allows a policy to be placed on the VPC endpoint to determine who can use it, and the endpoint itself can be referenced in conditions in other resource policies. For example, I could implement an S3 endpoint and apply a VPC endpoint policy that only allows a specific IAM role to use it. I can then also create an S3 bucket policy that only allows particular actions if they come through that VPC endpoint. In doing so, I ensure all traffic to S3 goes via a private link, control who or what can use that link, and also protect the bucket from being accessed by principals that are not using that VPC endpoint.
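The S3 example above can be sketched as two policy documents, built here as Python dictionaries. The endpoint policy lets only one IAM role use the endpoint for object reads and writes, and the bucket policy denies any request that does not arrive through that endpoint, using the `aws:SourceVpce` condition key. The bucket name, role ARN, endpoint ID and chosen actions are hypothetical placeholders.

```python
import json

BUCKET = "example-app-bucket"                                # hypothetical
APP_ROLE_ARN = "arn:aws:iam::111122223333:role/example-app"  # hypothetical
VPCE_ID = "vpce-0123456789abcdef0"                           # hypothetical

# Attached to the S3 VPC endpoint: only the app role may use it, for these actions.
endpoint_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "AllowAppRoleOnly",
            "Effect": "Allow",
            "Principal": {"AWS": APP_ROLE_ARN},
            "Action": ["s3:GetObject", "s3:PutObject"],
            "Resource": f"arn:aws:s3:::{BUCKET}/*",
        }
    ],
}

# Attached to the bucket: deny every request that did not come through the endpoint.
bucket_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "DenyUnlessViaEndpoint",
            "Effect": "Deny",
            "Principal": "*",
            "Action": "s3:*",
            "Resource": [f"arn:aws:s3:::{BUCKET}", f"arn:aws:s3:::{BUCKET}/*"],
            "Condition": {"StringNotEquals": {"aws:SourceVpce": VPCE_ID}},
        }
    ],
}

print(json.dumps(endpoint_policy, indent=2))
print(json.dumps(bucket_policy, indent=2))
```

Be aware that a blanket deny like this also blocks access from the console and from anywhere outside the VPC, including administrators, so a real policy usually needs carefully scoped exceptions and testing in a non-production account first.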

The third is managing access to and from the internet. While they are basic components of VPC networking, Internet Gateways, NAT Gateways and Egress-Only Internet Gateways are important tools for protecting resources at a network level. As with route tables, only allowing outbound network access to the resources that need it, and via the relevant gateway, protects them from both direct attack and malicious sites.
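The difference between the three gateways comes down to which side may start a connection and for which IP version. The lookup below is a deliberately simplified model of their basic behaviour (it ignores, for example, NAT64 and the need for a public IP address behind an Internet Gateway), and the `TIER_GATEWAY` mapping is a hypothetical example rather than a rule.

```python
from __future__ import annotations

# Which side may initiate a connection through each gateway, and for which IP versions.
GATEWAYS = {
    "internet-gateway": {"inbound": True, "outbound": True, "ip_versions": {4, 6}},
    "nat-gateway": {"inbound": False, "outbound": True, "ip_versions": {4}},
    "egress-only-internet-gateway": {"inbound": False, "outbound": True, "ip_versions": {6}},
}


def can_initiate(gateway: str | None, direction: str, ip_version: int) -> bool:
    """direction is "inbound" (internet starts it) or "outbound" (VPC resource starts it)."""
    if gateway is None:
        return False  # no gateway route: no internet access at all
    gw = GATEWAYS[gateway]
    return gw[direction] and ip_version in gw["ip_versions"]


# Hypothetical tiers: only the public tier is reachable from the internet,
# the app tier may fetch updates over IPv4, and the data tier has no internet path.
TIER_GATEWAY = {
    "public": "internet-gateway",
    "app": "nat-gateway",
    "data": None,
}
```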

Conclusion

So what would my final solution look like?

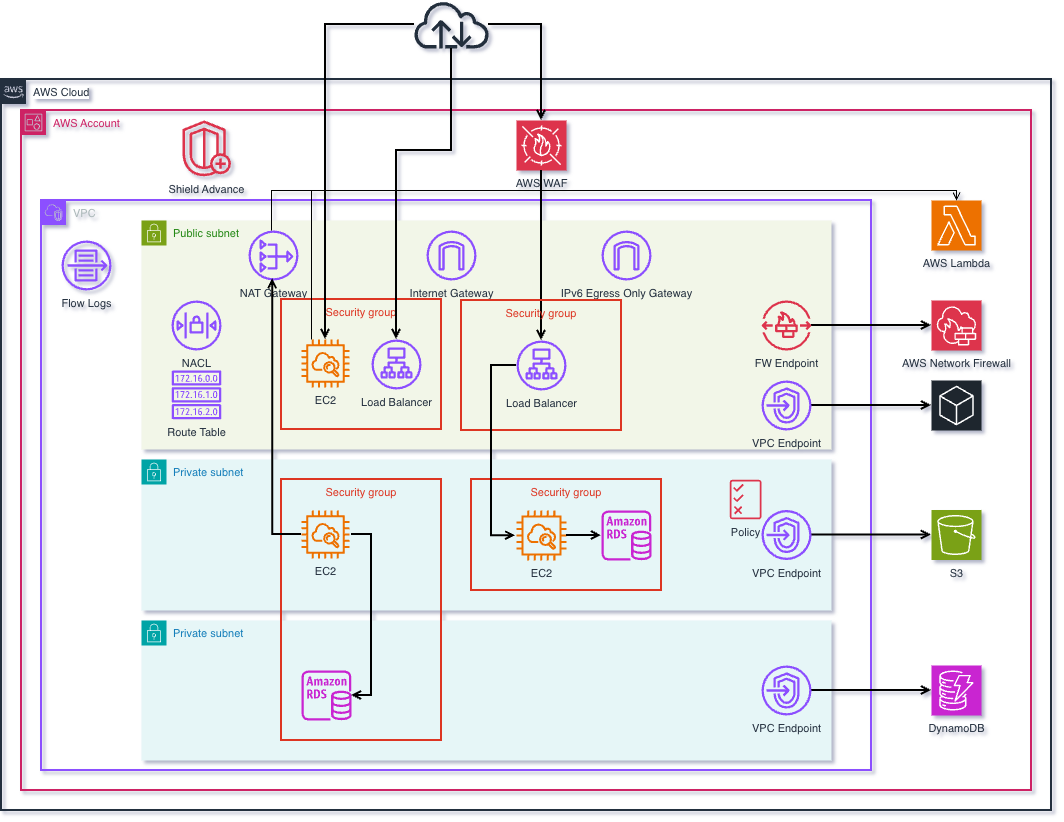

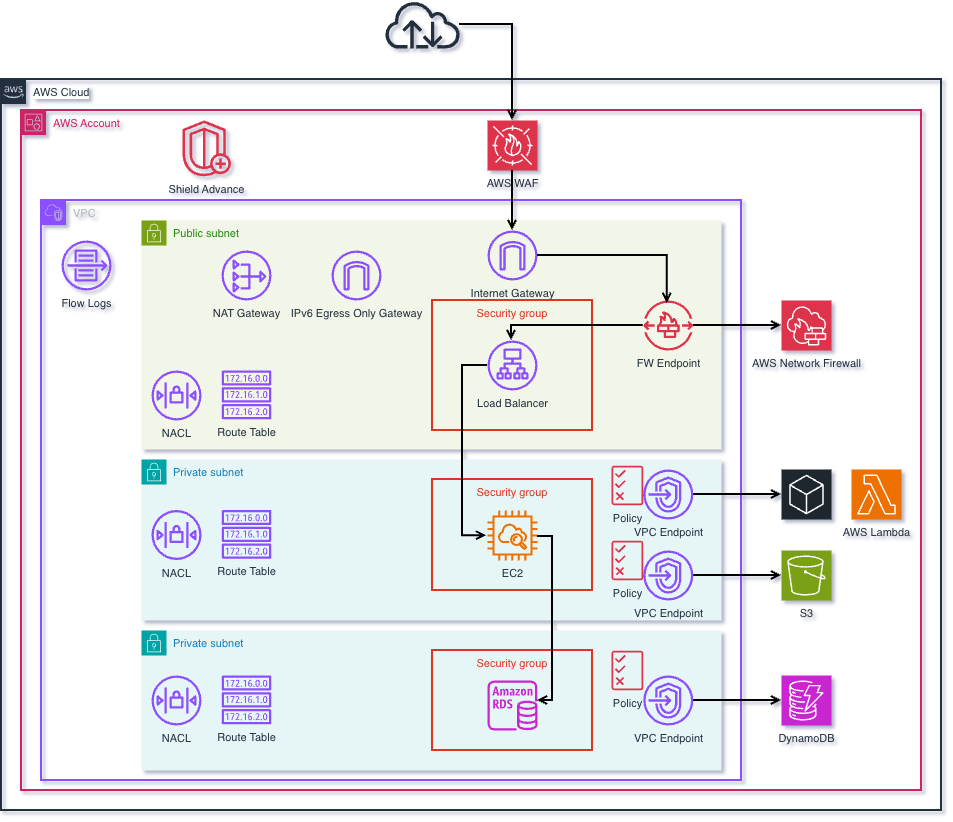

The diagrams above show how I would design and deploy a solution if the requirement was to have the most robust security. This comes at a cost, in AWS resources, increased development activity and operational support, so a risk analysis would be needed to understand whether the cost of implementing all the components is justifiable or only portions are needed. I would, however, argue that where there is no increase in AWS cost, the development and operational costs are insignificant compared to the opportunity cost of a security incident.

So let's look at my solution in a little more detail.

Firstly, the idea is to scope down the traffic at every step in the network. This starts with Shield and WAF at the network edge and continues with AWS Network Firewall at the VPC edge, ensuring that only valid traffic reaches the service entry point. As stated, I'd ideally only have a load balancer (with a security group) as the VPC entry point and have CloudFront as the external entry point. This way access to the load balancer can be scoped to CloudFront. In this scenario, traffic has passed through six layers by the time the load balancer processes it.
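One way to scope the load balancer to CloudFront is to reference the AWS-managed CloudFront origin-facing prefix list in the load balancer's security group. The function below is a sketch that expects a boto3 EC2 client passed in as `ec2`; the group ID in the usage line is a hypothetical placeholder. As far as I know, a referenced prefix list counts against the security group rule quota by its maximum number of entries, so it is worth checking your quotas first.

```python
CLOUDFRONT_ORIGIN_FACING = "com.amazonaws.global.cloudfront.origin-facing"


def restrict_to_cloudfront(ec2, alb_group_id: str) -> dict:
    """Allow HTTPS into the ALB's security group only from CloudFront's origin-facing IPs."""
    # Look up the region-specific ID of the AWS-managed prefix list by its name.
    response = ec2.describe_managed_prefix_lists(
        Filters=[{"Name": "prefix-list-name", "Values": [CLOUDFRONT_ORIGIN_FACING]}]
    )
    prefix_list_id = response["PrefixLists"][0]["PrefixListId"]

    permission = {
        "IpProtocol": "tcp",
        "FromPort": 443,
        "ToPort": 443,
        "PrefixListIds": [
            {"PrefixListId": prefix_list_id, "Description": "CloudFront origin-facing"}
        ],
    }
    ec2.authorize_security_group_ingress(GroupId=alb_group_id, IpPermissions=[permission])
    return permission
```

Usage would look like `restrict_to_cloudfront(boto3.client("ec2"), "sg-0123456789abcdef0")`, followed by removing any existing 0.0.0.0/0 rule on port 443 so the prefix list becomes the only way in.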

Second is to segment the layers and secure each one independently. This is achieved through a tiered subnet approach in combination with NACLs and security groups that only allow traffic between the relevant layers, a methodology that can also be applied to larger networks with layers such as management and inspection. NACLs provide the broad brush strokes, such as only HTTPS in, or only HTTPS or database ports out, while the security groups in each layer provide service-specific rules.

Third is to keep as much traffic as possible on the AWS network by using VPC endpoints. This not only reduces the risk of sending traffic over the internet but also provides additional tooling to manage that traffic.

Finally, and this is one I'll look at in an SDLC post, is to remove traffic completely. For example, rather than securing internet access for instances to patch themselves, move patching to AWS Systems Manager Patch Manager and make the traffic private to AWS. Alternatively, use golden AMIs that are updated and released each week, removing the need for individual services to perform patching.

As an architect I know there are always multiple methods to achieve the same goal. What are your thoughts on this approach?

Is there a better way to do some of the things I have suggested?

As always, I am as interested to learn alternative methods as I am to share my thoughts.
